Decision Tree Analysis in R

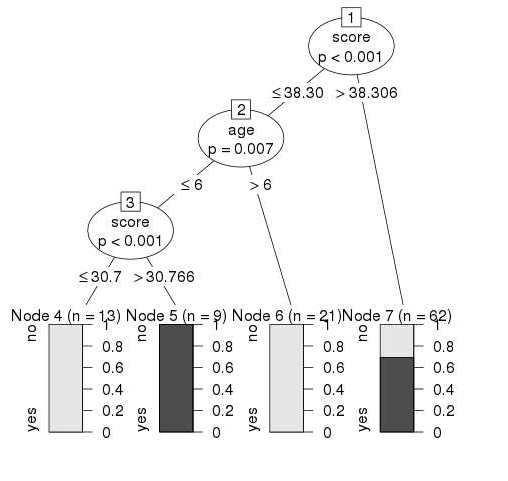

A decision tree is a type of machine learning algorithm that represents decisions about the features, and the results of those decisions, as a tree-like structure. The root node is the starting point, or root, of the decision tree.


R's rpart package provides a powerful framework for growing classification and regression trees.
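As a minimal sketch of what rpart does, the snippet below grows one classification tree and one regression tree on R's built-in `iris` and `mtcars` data sets. The data sets, formulas and the `minsplit = 10` setting are illustrative choices, not taken from the original post; `method = "class"` and `method = "anova"` are the rpart options for classification and regression respectively.

```r
# Minimal sketch: one classification tree and one regression tree with rpart.
# Data sets, formulas and control settings are illustrative assumptions.
library(rpart)

# Classification tree: predict the factor Species from the four measurements.
class_fit <- rpart(Species ~ ., data = iris, method = "class")

# Regression tree: predict numeric fuel economy (mpg) from weight and horsepower.
# minsplit is lowered from its default of 20 because mtcars has only 32 rows.
reg_fit <- rpart(mpg ~ wt + hp, data = mtcars, method = "anova",
                 control = rpart.control(minsplit = 10))

print(class_fit)  # each node: split rule, n, misclassified count, predicted class, class probabilities
print(reg_fit)    # each node: split rule, n, deviance, mean mpg in that node
```

For a picture instead of a text listing, `plot(class_fit); text(class_fit)` draws the fitted tree with its split labels.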

Decision trees are intuitive: a decision tree is a graph that represents choices and their results in the form of a tree, expanded until every branch reaches an end point.

The nodes in the graph represent an event or choice, and the edges represent the decision rules or conditions. Keep adding chance and decision nodes to your decision tree until no branch can be expanded any further. To see how it works, let's get started with a minimal example.
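The node-and-edge idea can be sketched in base R without any packages. In this hypothetical example, each internal node stores a variable and a threshold, the two edges leaving it stand for the conditions "below the threshold" and "at or above it", and each leaf stores a predicted label. The tree, its thresholds and the `classify()` helper are made up for illustration; they are not an API from rpart or any other package.

```r
# Hypothetical hand-built tree: internal nodes test one variable against a
# threshold; the "yes"/"no" edges carry the conditions; leaves hold a label.
tree <- list(
  var = "Petal.Length", threshold = 2.45,
  yes = list(label = "setosa"),          # edge: Petal.Length < 2.45
  no  = list(                            # edge: Petal.Length >= 2.45
    var = "Petal.Width", threshold = 1.75,
    yes = list(label = "versicolor"),    # edge: Petal.Width < 1.75
    no  = list(label = "virginica")      # edge: Petal.Width >= 1.75
  )
)

# Start at the root and follow the edge whose condition the observation meets,
# until a leaf (a node that has a label) is reached.
classify <- function(node, obs) {
  while (is.null(node$label)) {
    node <- if (obs[[node$var]] < node$threshold) node$yes else node$no
  }
  node$label
}

classify(tree, list(Petal.Length = 1.4, Petal.Width = 0.2))  # "setosa"
classify(tree, list(Petal.Length = 5.1, Petal.Width = 2.3))  # "virginica"
```

Nested lists keep the sketch short; a fitted model from rpart stores the same information in a table of nodes (`fit$frame`) rather than in nested lists.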

The root node represents the entire population or sample. A decision tree is defined by parent-child pairs (i.e., from-to connections) and by the probability and associated value (e.g., cost) of traversing each of the connections.

Decision trees have many different algorithm implementations: in R there are the tree, rpart and party packages, and in Weka there are J48, LMT and DecisionStump. Different algorithms are likely to produce different results. It is a common tool used to … Denote the probability of …

Let us take a look at a decision tree and its components with an example. All a decision tree does is ask questions such as "Is the gender male?" or "Is the value of a particular variable higher than some threshold?"
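A fitted rpart tree asks exactly these kinds of threshold questions. The sketch below, assuming the built-in `iris` data and a made-up new flower, lists which variable each node tests and then routes the new observation to a leaf with `predict()`.

```r
library(rpart)

fit <- rpart(Species ~ ., data = iris, method = "class")

# Variable tested at each node, in node order; "<leaf>" marks terminal nodes.
fit$frame$var

# A new flower with hypothetical measurements is passed down the tree.
new_flower <- data.frame(Sepal.Length = 6.3, Sepal.Width = 3.3,
                         Petal.Length = 6.0, Petal.Width = 2.5)
predict(fit, newdata = new_flower, type = "class")  # predicted species
predict(fit, newdata = new_flower, type = "prob")   # class proportions in the leaf it lands in
```

The `type = "prob"` output shows that a leaf's answer is not always unanimous: it reports the share of each class among the training rows that ended up in that leaf.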

Mapping both potential outcomes in your decision tree is key.
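For a decision-analysis tree built from decision and chance nodes, one common way to evaluate it is to "roll it back": each chance node is worth the probability-weighted sum of its outcome values, and each decision node picks the option with the highest expected value. The sketch below assumes a hypothetical two-option decision; the option names, probabilities and payoffs are invented for illustration, and `chance_ev()` is a helper defined here, not a package function.

```r
# Hypothetical decision: each option leads to a chance node whose outcomes
# (all of them mapped) carry a probability and a value. Numbers are invented.
choices <- list(
  launch = list(prob  = c(success = 0.6, failure = 0.4),
                value = c(success = 100, failure = -50)),
  hold   = list(prob  = c(success = 1.0),
                value = c(success = 10))
)

# Expected value of a chance node: sum of probability * value over its outcomes.
chance_ev <- function(node) {
  stopifnot(isTRUE(all.equal(sum(node$prob), 1)))  # outcome probabilities must sum to 1
  sum(node$prob * node$value[names(node$prob)])    # match values to outcomes by name
}

# Decision node: evaluate every option, then choose the highest expected value.
evs <- vapply(choices, chance_ev, numeric(1))
evs                    # launch = 40, hold = 10
names(which.max(evs))  # "launch"
```

Here launching is worth 0.6 × 100 + 0.4 × (−50) = 40, which beats the certain 10 from holding.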
